The robots.txt file lets a website communicate with web crawlers and search engine robots. It tells these automated bots which parts of the site they may or may not crawl. It can also help manage crawl budget by steering crawlers toward important pages instead of spending resources on unimportant or redundant ones. Not all crawlers follow robots.txt directives; malicious bots in particular are likely to ignore them.
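To illustrate how a well-behaved crawler might interpret these instructions, here is a minimal sketch using Python's standard `urllib.robotparser` module. The file contents, the paths `/search/` and `/tmp/`, and the crawler name `ExampleBot` are hypothetical and chosen only for illustration; they are not defaults of any particular site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are illustrative assumptions.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /tmp/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)

# A page that no rule blocks is allowed for any crawler.
products_allowed = parser.can_fetch("ExampleBot", "https://mydomain.com/products/")

# A page under a disallowed prefix is refused.
search_allowed = parser.can_fetch("ExampleBot", "https://mydomain.com/search/?q=shoes")

print(products_allowed, search_allowed)
```

The check is purely advisory: a crawler has to run logic like this voluntarily, which is why bots that ignore the file are not stopped by it.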

Administration

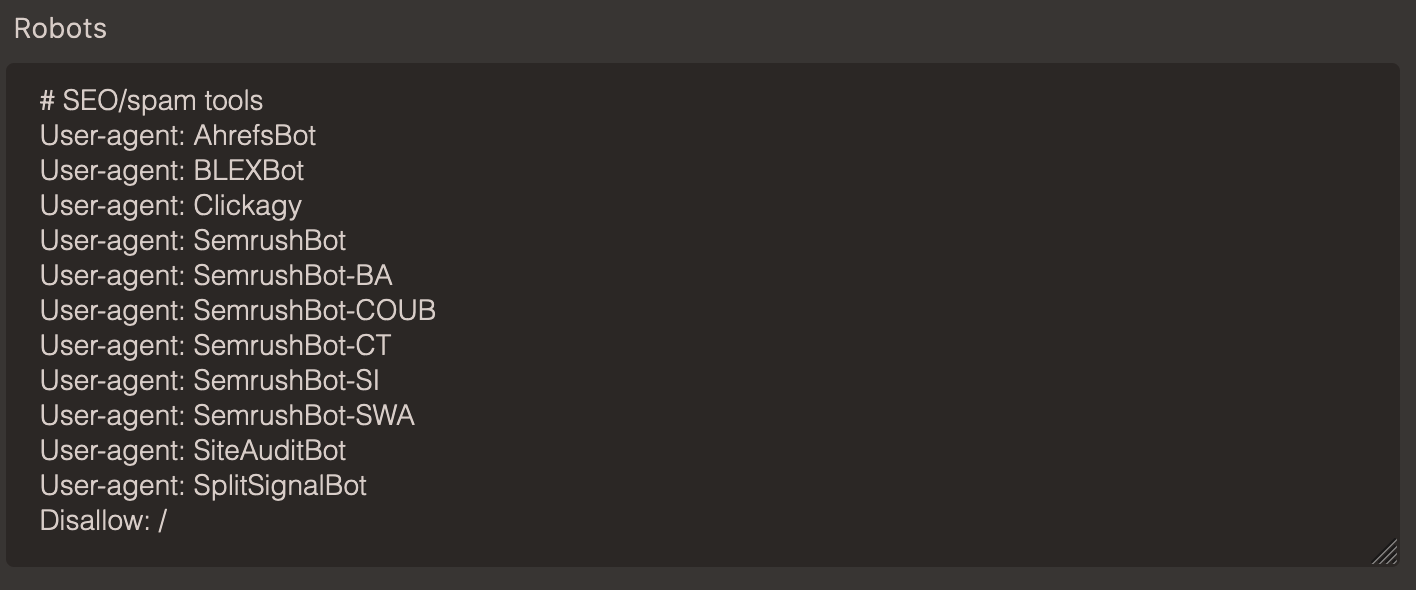

Under Site settings, you can edit the contents of the robots.txt file, which is always published at mydomain.com/robots.txt
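Because the file always sits at the root of the domain, its address can be derived from any page URL on the site. The sketch below shows one way to do that; the helper name `robots_url` and the example page URL are hypothetical, and `mydomain.com` is the same placeholder used above.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url: str) -> str:
    """Return the robots.txt address for the site that serves page_url."""
    parts = urlsplit(page_url)
    # Keep the scheme and host, replace the path, drop query and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_url("https://mydomain.com/shop/item?id=1"))
```

Opening the resulting address in a browser after saving the settings is a simple way to confirm that the published file matches what was entered.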